I’ve been thinking a little bit about the idea of the singularity, recently. It’s a common tech idea, dating back to at least the 80s. The general premise is that computers make research faster, which lets people make faster computers, which makes research faster, until the process starts automating itself with artificial intelligence and then everything goes faster and faster until infinite technological change happens in finite time.

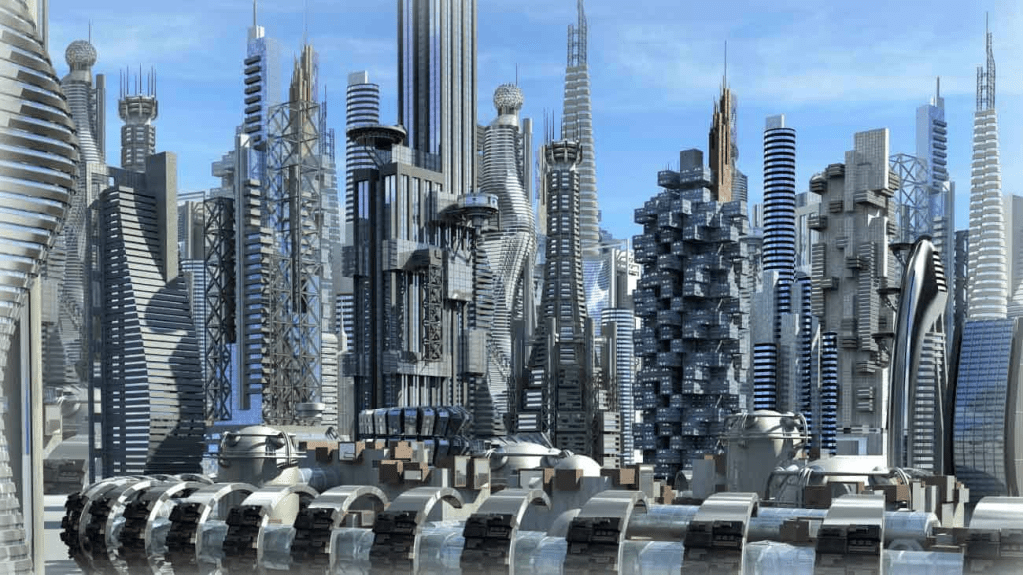

What, exactly, the consequences of this are varies by depiction. It could look like human extinction, with the living inhabitants of the Earth discarded as a part of a machine’s ecdysis. It could look like going to heaven, as machine superintelligence uploads everyone into flawless virtual bodies in a world without hunger, pain, or war. It could look like some combination of the two, as the machines send some to heaven and others to hell, or it could just mean that the AI goes off into the cosmos and leaves us all stranded on Earth.

But, no matter what, one of the key attributes of the “Singularity” is that tech doesn’t need anything else to survive.

Right now, the tech industry could be seen as the crown jewel of the economy. Building the most advanced computers requires an intricate network of trade. The processors are designed by a handful of companies, most of them American, which send their designs to be fabricated in Taiwan, where a single company makes a majority of the world’s top chips. But to make those chips, ever more advanced fabrication equipment is needed, the best of which is made by a Dutch company. And then all those machines have their own webs of dependencies, needing advanced machine tools, advanced materials, and advanced microchips, usually produced at the top of the same industrial web. In a technological singularity, one of the key outcomes is that this industrial network gets closed. The self-improving machines do the whole process from start to finish, reconfiguring their own software and hardware and cutting out the enormous and cumbersome industrial network that allows them to exist.

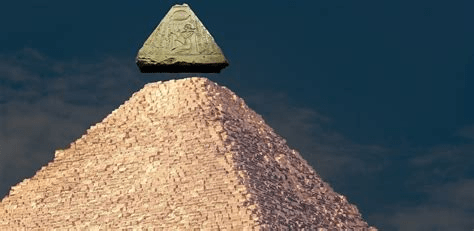

Whether or not this is realistic or even possible is not important. The important thing is that this vision is compelling. The capstone of the pyramid floats without the rest of the stones below it. The tech industry becomes independent, and no longer needs the rest of society, fully taking on the leading role it has assigned itself. In this vision of the singularity, the tech industry fully ephemeralizes the rest of the industrial superorganism.

What does it mean to ephemeralize the industrial superorganism? Ephemeralization is the process of using technology to shrink the equipment needed to do any task, “until you can do everything with nothing” (Buckminster Fuller). The industrial superorganism is the economy, which functions like a superorganism because it integrates many separate parts that are not directly related to each other but must operate together for the whole system to keep existing. I once spoke to someone who described a fighter jet as something like “the blastocyst of a much larger creature, that includes the factories and people that help make more fighter jets”. Every industry is a part of an enormous interconnected web of processes that allows each of the industrial components to be (re)produced.

If we think of the singularity as the ephemeralization of the rest of the industrial superorganism, we can then start to think about non-tech singularities.

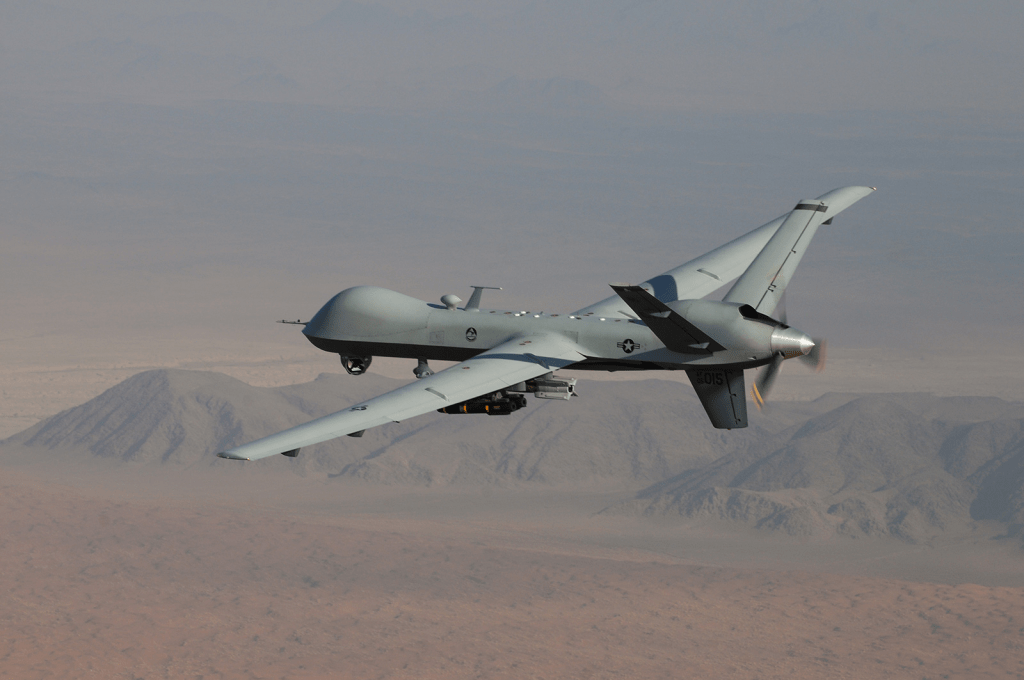

I recently read a 2015 paper by Benjamin Noys, called Drone Metaphysics, that talked about the philosophy and ethics of using military drones. In this paper, Noys notes the use of a “kill chain”, in which a series of people ultimately make the decision to kill a group of people on the ground with an attack drone somewhere far away. Increasingly, both at the time of the paper and today, there are efforts to automate this kill chain process, which, as Noys notes, leads many of the people involved to shed at least some of their responsibility for the killing of any given target. This automates not only the physical act of killing someone but also the moral act of choosing to kill someone. Noys mentions, and then dismisses, the idea of a “pure war”, where fighting is done autonomously without the involvement of soldiers, noting that drones have their own industrial support network.

But, if we imagine a military singularity, that might look like the “pure war” mentioned briefly in Drone Metaphysics. The military ephemeralizes the rest of the industrial superorganism and is able to supply, equip, and reinforce itself without any outside assistance. Autonomous supercarriers vomit out swarms of robotic death machines and reproduce themselves without human intervention. Military industrial complexes, military recruiting, and military objectives all become obsolete, and wars are fought indefinitely by machines. Military tragedies become natural disasters, with rolling conflicts largely ignoring humanity, except as a territory to be fought over. It might look a little bit like the video game The Forever Winter, or the science fiction short story The Beast Adjoins.

Another type of singularity might be an industrial singularity. Philip K. Dick’s 1955 short story Autofac discusses automated, self-reproducing factories colonizing the planet and displacing humanity, providing piles and piles of random goods while completely eliminating the average person’s autonomy. Any valuable resource is scavenged, including the metal out of light switches and all the world’s oil, leaving humans living in a degraded, post-apocalyptic state. The protagonists heroically work to destroy one of the autofacs, but find that it is full of seeds for more factories—some of them probably launched into space. A more extreme version of this could look like an industrial singularity, where factories spew out goods without needing to seriously take in any outside resources. Unlike Autofac’s relatively primitive tape-controlled factories, nanotechnological factories could be imagined that ingest air and seawater and use nuclear chrysopoeia to create any necessary chemical elements, before emitting whatever goods are desired. This is not that different from a technological singularity, but it is an interesting alternative focus, where there are no superintelligences, just super-factories.

Any field can be imagined to have a singularity. In an agricultural singularity, crops grow without the need for water, fertilizer, or soil. In an architectural one, whole buildings spring up without any need for a supporting construction industry. I imagine that literary and artistic singularities would be somewhat like the usual tech singularity, where machines that make ideas get out of control, but instead of the ideas becoming too intelligent, they become too strange, buried in infinite strata of meaning, of culture and counterculture and a trillion years of artistic experimentation in a single second.

The technological singularity is often described by its evangelists as being something like all of these singularities rolled into one, where the hyperintelligent machines are able to pursue war and industry and art at a higher level than any pathetic baseline human ever could, but I don’t think that this is actually borne out in the depictions of a singularity that I am familiar with.

For example, in Charles Stross’s Singularity Sky, the Eschaton, a godlike superbeing that presides over all of the known universe and polices humanity to prevent apocalyptic events (and competition), is not shown to pursue any of these things at all, besides maybe industry. It uses a combination of human agents and interference with reality on a more fundamental level to achieve its goals, and does not seem to pursue much of anything beyond them.

Similarly, the super-enlightened beings of a region of space called the Transcend in Vernor Vinge’s A Fire Upon the Deep are shown to mostly study sciences and interfere with the rest of civilization in a limited way, before mysteriously disappearing. In Neuromancer by William Gibson and the rest of the Sprawl trilogy, the superintelligent machines become like gods in the internet, called Loa, but don’t seem to pursue much art or industry. In Asimov’s The Last Question, the godlike supercomputers of the far future restart all of the universe after spending all of their history pondering its nature.

Now, there is no strict reason why a superintelligent being couldn’t be integrated with these other types of singularity, but I think that it might be more interesting to explore these singularities separately. A military singularity where there are no artificial intelligences at the helm, just rough military algorithms. Artistic supercomputers that don’t know how to make self-replicating nanomachines, and that can’t break human civilization in an instant, because it’s not what they’re for.

One final type of singularity that should be considered is a spiritual/personal singularity. The spiritual singularity is where a person ephemeralizes the industrial (and biological) superorganism that supports them. This means that the person becomes independent of all manufacturing, ecology, society, and medicine. In my opinion, the spiritual singularity looks a little bit like escaping the cycle of Samsara in Buddhism, where a soul finally breaks free from desire and the self, and exits the universe entirely, or possibly returns to help others as a Bodhisattva—sort of like a friendly supercomputer, like Charles Stross’s Eschaton. I am not a Buddhist (and I’m not that familiar with Buddhism), but I think it is an interesting concept to contemplate.

I think that breaking away from the traditional technological singularity could help us write more interesting science fiction. For a long time, the computer has been the height of technology, and it has been difficult for science fiction authors to consider a world that has been radically reshaped in a way that isn’t based solely around more advanced computing technologies becoming available. But a lot of the predictions that made the singularity seem plausible are breaking down. Once, the singularity could be spoken about with authority, because from the 1960s until somewhat recently, the number of transistors on a computer chip doubled roughly every two years, a phenomenon described as Moore’s law. The world became linked together in the Internet, and computers went from building-sized government monstrosities to something that everyone had in their home, and then something everyone had in their pocket.

It is easy to see how someone writing in the past could have expected that exponential growth to continue forever, because Moore’s law started to look more and more ironclad, and the influence of computers seemed like it would grow forever. And it has certainly grown, and computers will probably be better in the future than they are today. But Moore’s law wasn’t just a prediction based on studying data. It was also a target for industry. Companies pushed themselves to meet Moore’s law, building more and more advanced integrated circuits to meet this prediction, and now it is extremely difficult to make any improvements in terms of chip miniaturization, meaning that Moore’s law is almost certainly coming to an end. Similarly, instead of enthusiastically putting computer chips in our brains, many people are pushing back on the influence of the smartphone, and nostalgia for a time before the proliferation of personal computers is common. Probably, there will someday be people who have chips in their brains, but it seems like the technology will not suddenly explode outwards and take over. Similar predictions about artificial intelligence have also fallen short, with new “artificial intelligence” large language models like ChatGPT proving to be harder and harder to improve—the opposite of an intelligence explosion, even setting aside that these machines do not exactly think in the way we can easily assume they do.

The technological singularity, once thought to be inevitable, now seems likely to be impossible, and it is also tainted by its association with large tech companies.

Nevertheless, I do not think that writers should discard the idea of singularities altogether. Even if they are implausible, I think that we should use the loss of faith in the idea of the singularity as an opportunity to explore other kinds of singularity, and broaden the scope of possible futures that we consider as science fiction authors.
